Third Webinar: Blockchain

Part of the webinar series “Advanced Technologies, Privacy and the Challenges of the Future”. The webinar took place on 17.2.2025 with Prof. Eli Ben-Sasson, co-founder and CEO of StarkWare Industries, and was moderated by Adv. Rivki Dvash.

Second Webinar: Synthetic Data and Disinformation

Part of the webinar series “Advanced Technologies, Privacy and the Challenges of the Future”. The webinar took place on 1.2.2025 with representatives of the Israel Internet Association, Idan Ring and Dr. Asaf Wiener, and was moderated by Adv. Rivki Dvash.

FPF – Israel Annual Recap: 2024 in Review

Overview: As 2024 draws to a close, we reflect on a year of unprecedented challenges, set against the backdrop of political, social, and security crises that have shaken the Middle […]

FPF – Israel Conference at Cyber Week 2024 – AI Regulation & Data Protection

Event Recap: On June 26, 2024, FPF – Israel held a Cyber Week conference, “From AI Regulation Around the Globe to Data Protection in Time of Conflict.” Background: Once […]

Co-hosted Event: Developments in Global Legislation

We are excited to invite you to an event co-hosted by Shibolet Law Firm, the Network Advertising Initiative (NAI), and FPF – Israel Tech Policy Institute on: ‘Developments in Global Legislation – […]

AI Governance in the Wake of ChatGPT – Policy and Governance

FPF – Israel Tech Policy Institute is thrilled and proud to be partnering with #CyberWeek TLV 2023 once again! Future of Privacy Forum CEO Jules Polonetsky and Omer Tene, Senior Fellow and Partner at Goodwin, will be moderating […]

Artificial Intelligence in Healthcare and Social Justice: Barriers and Responses

It is universally accepted that everyone has the right to the enjoyment of the highest attainable standard of physical and mental health. The right to health implies various other entitlements, […]

Can Artificial Intelligence mitigate the Long-Term Care crisis?

By Dr. Sivan Tamir*

Society seems to be facing a long-term care (LTC) crisis. LTC is a collective term for various services (such as assistance in daily living activities; home […]

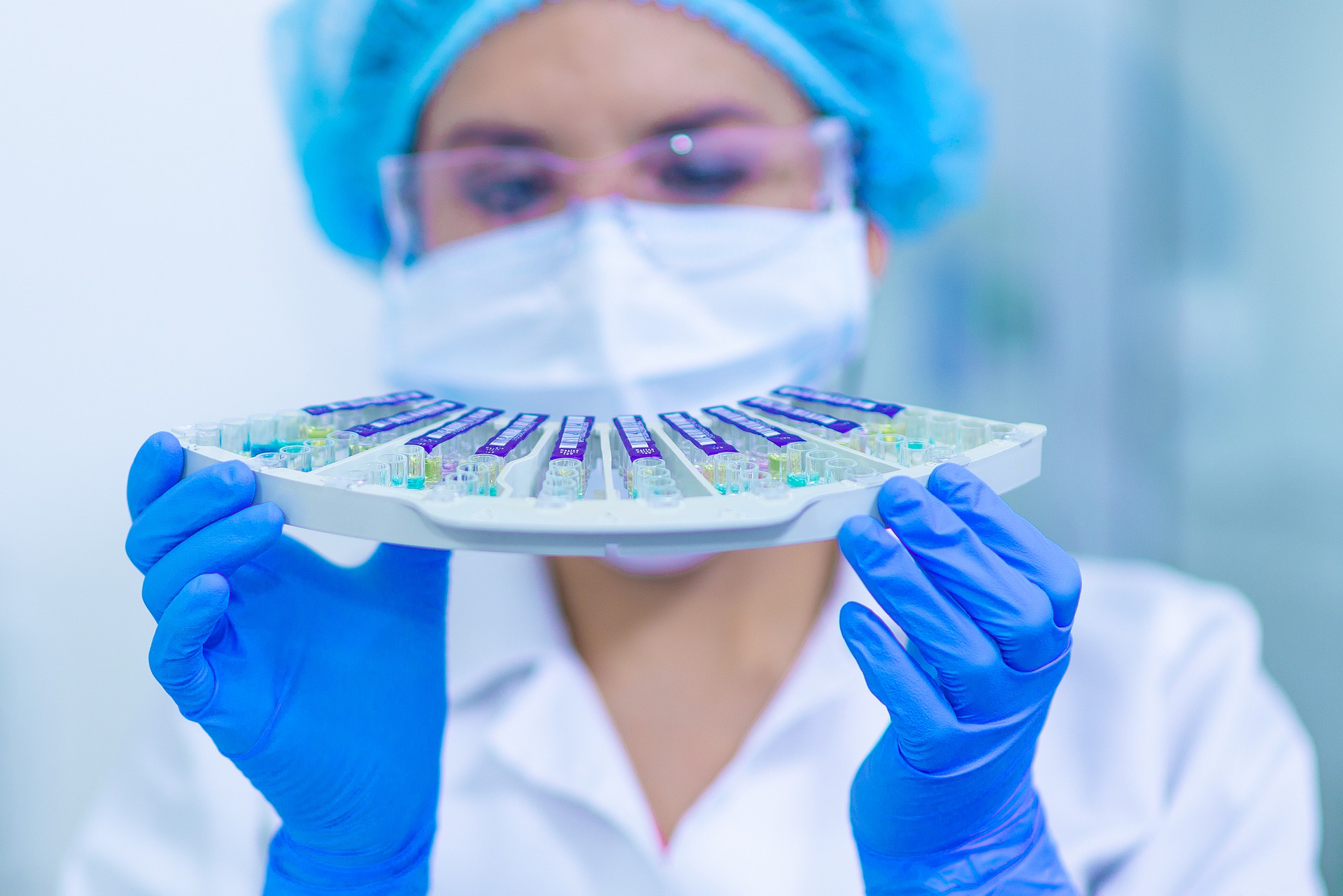

Event Recap – AI in IVF (7th June 2021)

On June 7th, we held a first-of-its-kind webinar (in Hebrew) on AI in IVF – Clinic, Lab, Technology and Ethics, where the following aspects of the novel introduction of artificial intelligence […]

Implementing Artificial Intelligence in the Healthcare Field – some ethical concerns

The development and implementation of Artificial Intelligence (AI)-based tools in the healthcare field seem to be steadily increasing. Indeed, there seems to be no limit to human ingenuity when it comes to artificial intelligence.